IdeaOps is a unified "spatial" operating system engineered to merge the fractured workflows of design, development, and product strategy.

By replacing traditional dashboard architecture with a context-aware, floating command interface, the platform empowers cross-functional teams to generate prototypes, automate documentation, and write production-ready code through a single natural-language input.

The initial objective was to streamline product development, but an audit of high-velocity teams exposed significant operational friction caused by siloed toolchains.

The Silo Problem: Modern product development is fundamentally fractured. Designers operate in canvas tools, developers in code editors, and product managers in documentation apps.

The Translation Penalty: Upwards of 40% of operational "work" consisted merely of translating assets between these disconnected tools—such as turning a meeting note into a development ticket, or a static design into Swift code.

Dashboard Fatigue: Traditional SaaS architectures are built around complex, destination-based interfaces that users must navigate, rather than integrating seamlessly into their existing desktop environments and thought processes.

Before defining the system architecture, it was necessary to deconstruct the friction of cross-functional collaboration.

Intensive analysis of high-performance teams mapped the distinct requirements of different operations ("Ops") roles within a single ecosystem.

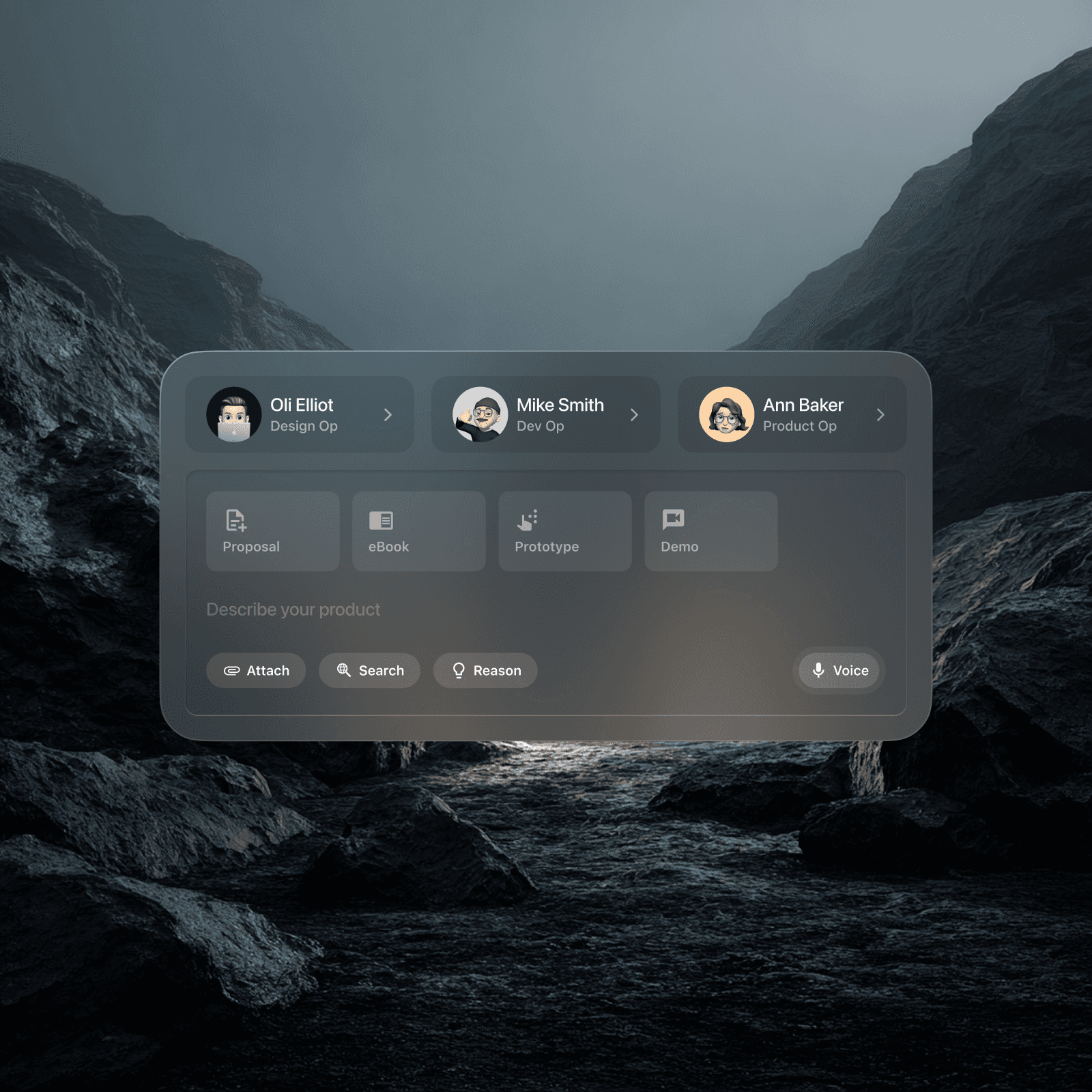

The core objective became engineering a "Product OS" that sits entirely above existing tools—a unified layer where Designers, Developers, and Product Managers collaborate using the exact same interface to trigger discipline-specific outcomes. Personas were established to balance the rapid asset generation needed by Design Ops, the clean code syntax required by Dev Ops, and the strategic synthesis demanded by Product Ops.

Bypassing traditional flat Material Design, the process moved directly into engineering a radical spatial architecture.

To support the modular structure, the design system focused on atomic versatility and depth.

Spatial Command Interface: The traditional "page" metaphor was removed entirely. The architecture utilises floating, glassmorphic cards that overlay the user's existing desktop, creating an immersive "Heads-Up Display" (HUD). Heavy background blurs and multi-layered shadows physically ground the UI in 3D space.

The "Omni-Bar" Engine: A central input field acts as the system's brain, seamlessly combining text input, voice dictation, and file attachment into a single, context-aware component.
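The merge step behind a single multi-modal input can be sketched as follows. This is a minimal illustration, assuming dictation has already been transcribed to text upstream; `Attachment`, `OmniRequest`, `build_request`, and the `context` keys are hypothetical names, not the platform's actual API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Attachment:
    """A file dropped onto the bar (hypothetical shape: name + MIME type)."""
    name: str
    mime_type: str


@dataclass
class OmniRequest:
    """One normalised request, however the user chose to express it."""
    text: str
    source: str  # "text", "voice", or "mixed"
    attachments: List[Attachment] = field(default_factory=list)
    context: Dict[str, str] = field(default_factory=dict)


def build_request(
    typed_text: str = "",
    voice_transcript: Optional[str] = None,
    attachments: Optional[List[Attachment]] = None,
    context: Optional[Dict[str, str]] = None,
) -> OmniRequest:
    """Merge typed text, a voice transcript and attachments into one request.

    Assumes speech-to-text has already run; this sketch only handles the merge.
    """
    typed = typed_text.strip()
    spoken = (voice_transcript or "").strip()

    # Record which input channels were used.
    if typed and spoken:
        source = "mixed"
    elif spoken:
        source = "voice"
    else:
        source = "text"

    # Join the non-empty channels so downstream intent parsing sees one string.
    text = " ".join(part for part in (typed, spoken) if part)
    return OmniRequest(
        text=text,
        source=source,
        attachments=list(attachments or []),
        context=dict(context or {}),
    )
```

For example, `build_request("Turn this into a ticket", "and tag it high priority", [Attachment("notes.md", "text/markdown")], {"focused_app": "Figma"})` yields a single `"mixed"` request whose `context` records what the user was looking at, which is the kind of signal a context-aware bar would need.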

Split-View Hybrid Environment: A dynamic coding environment was engineered to allow real-time code-to-canvas translation. As SwiftUI logic is typed, the system simultaneously generates a pixel-perfect, interactive mobile preview, allowing for natural language refinement (e.g., typing "Add a search filter" to instantly inject the correct code block).

The primary advantage of generating functional prototypes is the ability to validate high-level interaction models early.

Early iterations resembled a standard SaaS dashboard with sidebars and tables. However, beta testing with power users revealed a critical insight: for high-performance teams, "UI is friction."

Because the architecture was adaptable, a major structural pivot was executed. The complex dashboard was abandoned entirely in favour of a "Command-K" style spatial interface. The platform was redesigned around "Triggers" and "Actions" rather than navigation menus.
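A "Triggers and Actions" model can be approximated as a registry that maps a phrase to a role-specific action, so the same command yields discipline-specific outcomes. In this hypothetical sketch, plain substring matching stands in for the AI intent interpretation, and `TriggerRegistry`, `register`, `dispatch`, and the `role` context key are illustrative names only.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

# An action receives the raw command plus context and returns a result string.
Action = Callable[[str, Dict[str, str]], str]


@dataclass
class Trigger:
    """Hypothetical trigger: fires when its phrase appears in the command."""
    phrase: str
    action: Action
    required_role: Optional[str] = None  # e.g. "design", "dev", "product"


class TriggerRegistry:
    def __init__(self) -> None:
        self._triggers: List[Trigger] = []

    def register(self, phrase: str, action: Action,
                 required_role: Optional[str] = None) -> None:
        self._triggers.append(Trigger(phrase.lower(), action, required_role))

    def dispatch(self, command: str, context: Dict[str, str]) -> Optional[str]:
        """Run the first trigger whose phrase and role both match.

        Substring matching is a stand-in for AI intent interpretation.
        """
        lowered = command.lower()
        for trigger in self._triggers:
            if trigger.phrase not in lowered:
                continue
            if trigger.required_role and context.get("role") != trigger.required_role:
                continue
            return trigger.action(command, context)
        return None  # No trigger matched; this sketch has no menu fallback.


# Example wiring: one phrase, discipline-specific outcomes.
registry = TriggerRegistry()
registry.register("generate", lambda cmd, ctx: f"prototype for: {cmd}", "design")
registry.register("generate", lambda cmd, ctx: f"SwiftUI code for: {cmd}", "dev")
registry.register("ticket", lambda cmd, ctx: f"ticket: {cmd}")
```

Here "Generate the login screen" dispatches to a prototype for a designer and to code for a developer, while a command with no matching trigger returns `None`. Ordering matters: the first matching trigger wins, which keeps dispatch predictable at the cost of requiring more specific triggers to be registered first.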

Ambient meeting recording illustrates the shift: the AI listens to stakeholder conversations in real time and instantly categorises the audio into actionable blocks (compliance requirements, risk questions, automatically linked resources) without the user ever clicking through a menu. This iteration successfully transformed the tool from a destination into a seamless extension of the user's workflow.
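The categorisation step can be sketched with simple rules. This assumes the audio has already been transcribed into text segments; the keyword cue lists and the `categorise` function are hypothetical placeholders for the AI classifier described here, chosen only to show the shape of the output (one list per actionable block).

```python
import re
from typing import Dict, List

URL_PATTERN = re.compile(r"https?://\S+")

# Hypothetical cue lists standing in for the AI classifier described in the text.
COMPLIANCE_CUES = ("must", "required", "compliance", "gdpr", "audit")
RISK_CUES = ("risk", "what if", "could this", "fail")


def categorise(segments: List[str]) -> Dict[str, List[str]]:
    """Sort transcribed segments into actionable blocks."""
    blocks: Dict[str, List[str]] = {
        "compliance": [],
        "risk_questions": [],
        "resources": [],
    }
    for segment in segments:
        text = segment.strip()
        lowered = text.lower()
        # Resources: collect every URL, trimming trailing punctuation.
        blocks["resources"].extend(
            url.rstrip(".,;:!?)") for url in URL_PATTERN.findall(text)
        )
        # A segment can land in more than one block.
        if any(cue in lowered for cue in COMPLIANCE_CUES):
            blocks["compliance"].append(text)
        if text.endswith("?") and any(cue in lowered for cue in RISK_CUES):
            blocks["risk_questions"].append(text)
    return blocks
```

Because each segment is handled independently, the same function could be called on each new segment as transcription arrives, which approximates the incremental behaviour described above. Substring cues are deliberately crude (for instance, "must" also matches "mustard"), so treat this as an illustration of the output structure, not of classification quality.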

Looking back at the development of IdeaOps, the most profound lesson was that designing a "Spatial UI" (interfaces that float rather than sit on a page) requires absolute context awareness.

The system must fundamentally understand what the user is looking at to be useful.

The critical takeaway was that reducing the visual interface to a simple input bar substantially increases the complexity of the backend architecture, forcing the AI to interpret user intent with extreme accuracy. By challenging the paradigm that enterprise productivity tools must look like spreadsheets, the platform established a new visual standard for "Pro Tools."

Automating the translation between raw meeting notes and technical requirements saved an estimated 12 hours per week per product manager, while "Time-to-Prototype" dropped by 70%. Future iterations will explore AR headset integration to take the operating system fully off the desktop screen and into native spatial computing.